Can deep learning recognise emotions from images?

For decades, computers have been masters of logic, calculation, and data storage. But they have always lacked one fundamental human trait: emotional intelligence. When you smile at your laptop, it sees a collection of pixels, not a sign of happiness. When you frown in frustration at a bug, it doesn't know you need help.

This is changing rapidly. A field known as Affective Computing, and specifically the application of Deep Learning to Facial Expression Recognition (FER), is teaching machines to "see" how we feel. But how accurate is it? Can a neural network really distinguish between a genuine smile and a merely polite one?

The Science of "Seeing" Feelings

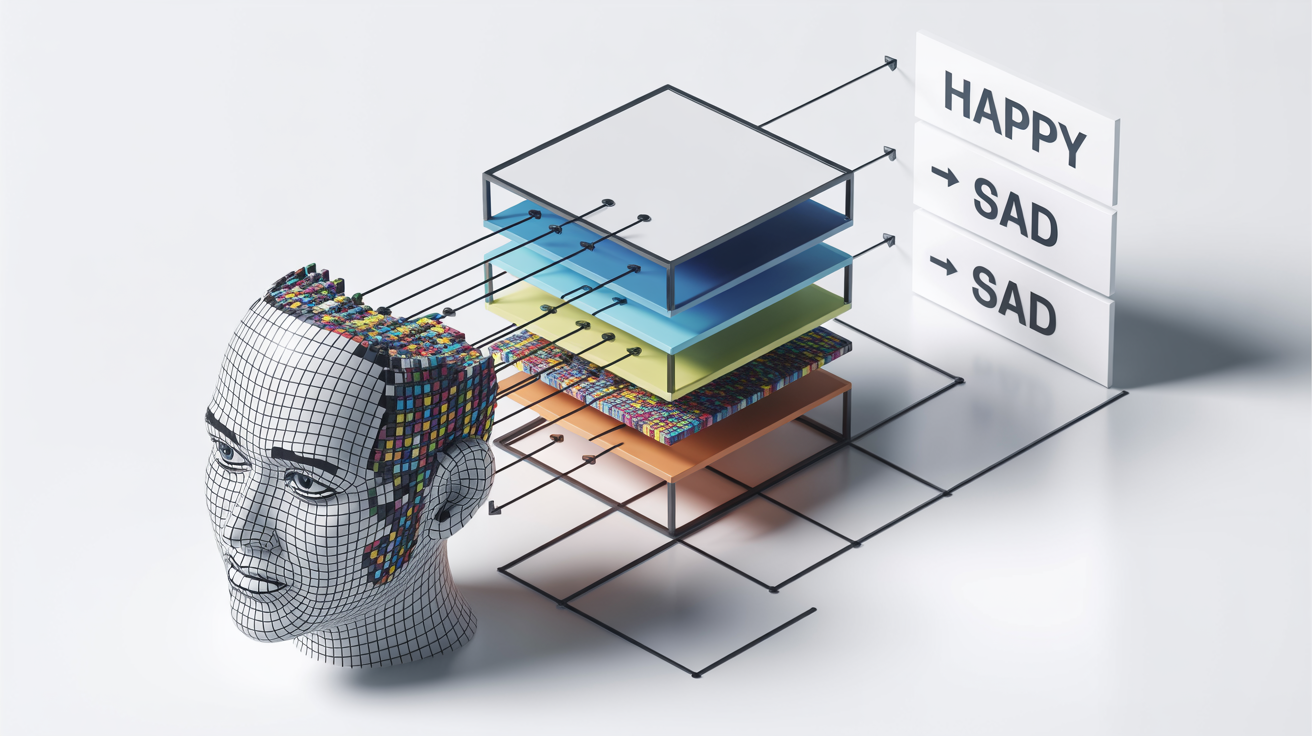

At the core of modern emotion recognition lies the Convolutional Neural Network (CNN). Unlike traditional algorithms that might look for simple geometric rules (e.g., "if mouth corners go up, then happy"), CNNs learn to identify complex, non-linear patterns by analyzing thousands or millions of labeled images.
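To make "learning patterns" a little more concrete, the sketch below shows the basic operation a convolutional layer performs: sliding a small filter across the pixels and keeping only the positive responses (a ReLU). It is a minimal pure-Python illustration, not a trainable network. The kernel values are hand-picked assumptions; a real CNN learns many such filters from labeled faces and stacks them with pooling and fully connected layers.

```python
def conv2d(image, kernel):
    """Valid-mode 2D cross-correlation (no kernel flip), which is what
    deep learning libraries call "convolution"."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0.0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        out.append(row)
    return out


def relu(feature_map):
    """Keep positive responses, zero out the rest."""
    return [[max(0.0, v) for v in row] for row in feature_map]


# Hand-picked (not learned) filter: fires where a bright region sits above a dark one.
HORIZONTAL_EDGE = [
    [1.0, 1.0, 1.0],
    [0.0, 0.0, 0.0],
    [-1.0, -1.0, -1.0],
]

if __name__ == "__main__":
    # Toy 5x5 grayscale image: bright upper rows, dark lower rows.
    image = [[1] * 5, [1] * 5, [1] * 5, [0] * 5, [0] * 5]
    for row in relu(conv2d(image, HORIZONTAL_EDGE)):
        print(row)
```

The filter responds only in the windows that straddle the bright-to-dark boundary; a trained network discovers filters like this (and far more complex ones) on its own instead of having them written by hand.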

The process generally works in three stages:

- Face Detection: Locating the face in the image and cropping it.

- Feature Extraction: The deep learning model analyzes textures, shapes, and the relative positions of landmarks (eyes, nose, mouth).

- Classification: The model assigns a probability to each emotion category, and the highest-scoring category is usually reported as the prediction.
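The first and last of these stages can be sketched in a few lines. This sketch assumes a face detector has already returned a bounding box (the `(top, left, height, width)` layout is an assumption of this example) and skips feature extraction by taking the model's raw output scores (logits) as given. Classification then applies a softmax so the scores become probabilities that sum to 1. The label order in `EMOTIONS` is hypothetical.

```python
import math

# Hypothetical label set and order; real datasets define their own.
EMOTIONS = ("anger", "contempt", "disgust", "fear", "happiness", "sadness", "surprise")


def crop_face(image, box):
    """Stage 1 (after detection): cut the face region out of a 2D pixel grid.

    `box` is assumed to be (top, left, height, width) from some face detector.
    """
    top, left, height, width = box
    return [row[left:left + width] for row in image[top:top + height]]


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(logits):
    """Stage 3: map one score per emotion to a {label: probability} dict."""
    if len(logits) != len(EMOTIONS):
        raise ValueError("expected one score per emotion")
    return dict(zip(EMOTIONS, softmax(logits)))


if __name__ == "__main__":
    image = [[r * 10 + c for c in range(10)] for r in range(10)]
    face = crop_face(image, (2, 3, 4, 5))
    print(len(face), len(face[0]))  # 4 rows x 5 columns

    # Made-up logits standing in for a trained model's output (stage 2).
    probs = classify([0.1, -1.0, 0.0, 0.3, 2.5, -0.5, 0.2])
    print(max(probs, key=probs.get))
```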

The 7 Universal Emotions

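Most deep learning models are trained with labels based on the theory of "Universal Emotions" proposed by psychologist Paul Ekman. He argued that, regardless of culture or language, humans share seven distinct facial expressions, each marked by a characteristic combination of facial muscle movements (the "Action Units" of Ekman's Facial Action Coding System, or FACS):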

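- Happiness: Cheek raiser, lip corner puller.

- Sadness: Inner brow raiser, lip corner depressor.

- Fear: Eyebrow raiser, upper lid raiser, lip stretcher.

- Disgust: Nose wrinkler, upper lip raiser.

- Anger: Brow lowerer, upper lid raiser, lip tightener.

- Surprise: Inner/outer brow raiser, jaw drop.

- Contempt: Lip corner puller and dimpler, on one side of the face only.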
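These muscle-movement combinations are the kind of hand-written rule contrasted with CNNs earlier. As a sketch, they can be encoded as sets of FACS Action Unit numbers and matched against whatever AUs a separate detector reports (that detector is assumed, not shown). The AU numbers are standard FACS codes; using Jaccard overlap as the matching score is an illustrative assumption, and contempt is encoded as AU12 plus AU14 even though real contempt expressions are one-sided.

```python
# Rule-based prototypes: emotion -> set of FACS Action Unit (AU) numbers.
PROTOTYPES = {
    "happiness": {6, 12},    # AU6 cheek raiser, AU12 lip corner puller
    "sadness": {1, 15},      # AU1 inner brow raiser, AU15 lip corner depressor
    "fear": {1, 2, 5, 20},   # AU1+AU2 brow raisers, AU5 upper lid raiser, AU20 lip stretcher
    "disgust": {9, 10},      # AU9 nose wrinkler, AU10 upper lip raiser
    "anger": {4, 5, 23},     # AU4 brow lowerer, AU5 upper lid raiser, AU23 lip tightener
    "surprise": {1, 2, 26},  # AU1 inner / AU2 outer brow raiser, AU26 jaw drop
    "contempt": {12, 14},    # AU12 + AU14 dimpler; one-sidedness is not modelled here
}


def jaccard(a, b):
    """Overlap between two sets: |a & b| / |a | b| (0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def match_emotion(detected_aus):
    """Return (best emotion, score) for a set of detected AU numbers."""
    detected = set(detected_aus)
    scores = {emo: jaccard(detected, aus) for emo, aus in PROTOTYPES.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]


if __name__ == "__main__":
    print(match_emotion({6, 12, 25}))    # smile with parted lips (AU25)
    print(match_emotion({1, 2, 5, 26}))  # raised brows, wide eyes, dropped jaw
```

A table like this is transparent but brittle: real expressions are often partial or blended, so a face can match several prototypes weakly and none of them well.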

By training models on datasets like FER2013 (the six basic emotions plus a neutral class) or AffectNet (which adds contempt as well), whose faces are labeled with these categories, AI can achieve impressive accuracy, in some controlled settings matching or even surpassing human performance.
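For a sense of what such training data looks like, FER2013 is commonly distributed as a single CSV file (`fer2013.csv`) with an `emotion` column (an integer from 0 to 6), a `pixels` column holding 48x48 = 2,304 space-separated grayscale values, and a `Usage` column marking the train/test split. The reader below assumes that layout and the label order 0 angry, 1 disgust, 2 fear, 3 happy, 4 sad, 5 surprise, 6 neutral; both are worth checking against your own copy of the file.

```python
import csv
import io

# Assumed FER2013 label order (index = value of the "emotion" column).
FER2013_LABELS = ("angry", "disgust", "fear", "happy", "sad", "surprise", "neutral")
SIDE = 48  # images are 48x48 grayscale


def parse_fer2013(lines):
    """Yield (label, 48x48 pixel grid, usage split) for each CSV row."""
    for row in csv.DictReader(lines):
        values = [int(v) for v in row["pixels"].split()]
        if len(values) != SIDE * SIDE:
            raise ValueError(f"expected {SIDE * SIDE} pixels, got {len(values)}")
        grid = [values[r * SIDE:(r + 1) * SIDE] for r in range(SIDE)]
        yield FER2013_LABELS[int(row["emotion"])], grid, row["Usage"]


if __name__ == "__main__":
    # A synthetic one-row file in the assumed layout (not real FER2013 data).
    sample = "emotion,pixels,Usage\n3," + " ".join(["128"] * SIDE * SIDE) + ",Training\n"
    for label, grid, usage in parse_fer2013(io.StringIO(sample)):
        print(label, len(grid), len(grid[0]), usage)
```

With a real download, the same generator can be fed an open file handle, e.g. `open("fer2013.csv", newline="")`.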

Where Deep Learning Struggles

Despite the hype, "reading minds" via images is incredibly difficult. Deep learning models face several significant hurdles that researchers are still trying to overcome.

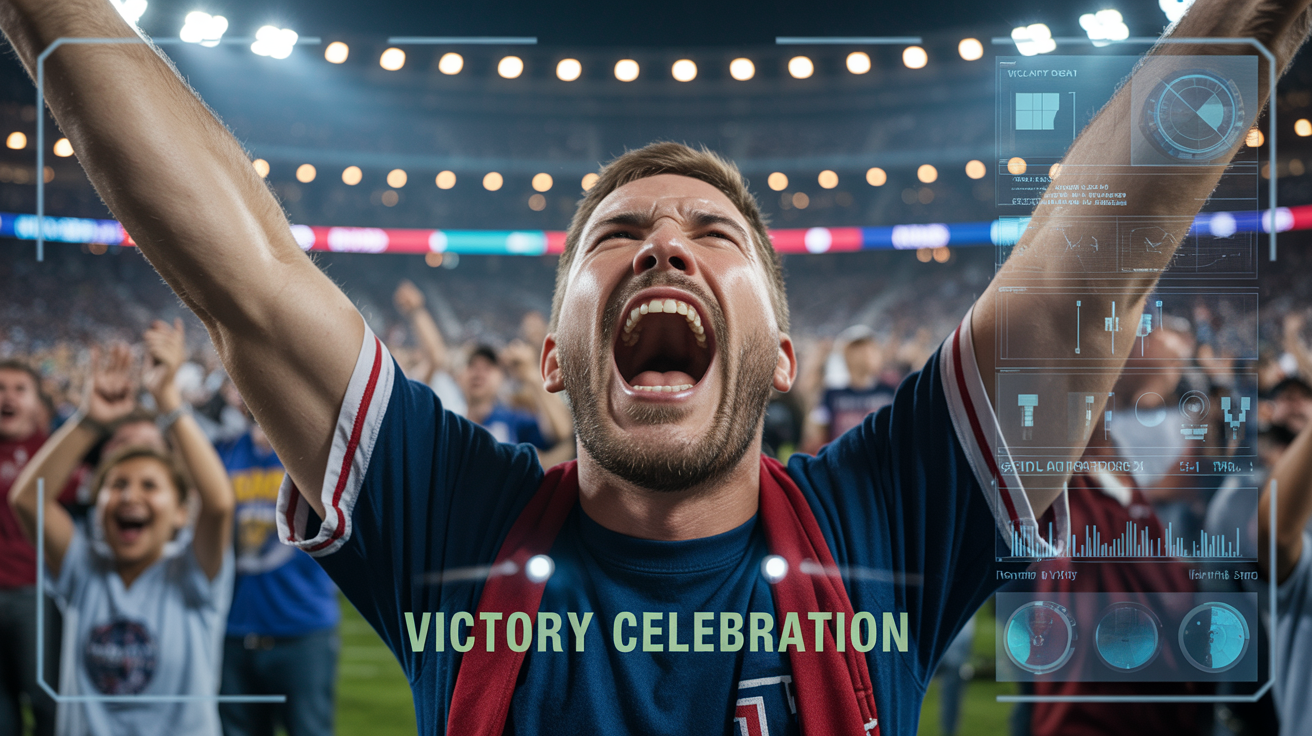

1. The Context Problem

Imagine a photo of a man screaming, eyes wide, mouth open. Is he terrified? Or did his favorite football team just score a winning goal? Without context, facial expressions are ambiguous. Modern "multimodal" models therefore try to analyze the entire scene, including body language, background, and audio, to make a more reliable judgment.
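One simple way to combine modalities is late fusion: run a separate classifier on each input (face, scene, audio, and so on) and take a weighted average of their probability outputs. The sketch assumes such classifiers already exist; the scores and equal weights below are invented to mirror the screaming-fan example. Many multimodal models instead fuse learned features inside a single network, so this illustrates the idea rather than how any particular system works.

```python
# Reduced label set for this example only.
EMOTIONS = ("fear", "happiness", "surprise")


def late_fusion(modality_probs, weights):
    """Combine per-modality probability lists with a weighted average.

    modality_probs: {modality name: [p_fear, p_happiness, p_surprise]}
    weights: {modality name: non-negative weight}
    """
    fused = [0.0] * len(EMOTIONS)
    for name, probs in modality_probs.items():
        for i, p in enumerate(probs):
            fused[i] += weights[name] * p
    total = sum(fused)  # renormalise so the result is again a distribution
    return {emo: value / total for emo, value in zip(EMOTIONS, fused)}


if __name__ == "__main__":
    # Made-up outputs: the face alone looks fearful, the stadium scene looks joyful.
    face = [0.60, 0.20, 0.20]
    scene = [0.05, 0.85, 0.10]
    fused = late_fusion({"face": face, "scene": scene}, {"face": 0.5, "scene": 0.5})
    print(max(fused, key=fused.get), fused)
```

The face classifier on its own would answer "fear"; once the scene is weighted in, "happiness" comes out on top.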

2. Micro-expressions

True emotions often flash across our faces for a fraction of a second, sometimes for as little as 1/25th of a second, before we mask them. Standard video analysis often misses these "micro-expressions," capturing only the posed or social mask we put on afterwards.
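A quick calculation shows why ordinary frame rates struggle. Treating the expression as a window of fixed length and each frame as an instantaneous sample (a simplification), a 1/25-second event spans 30 × 1/25 = 1.2 frame intervals at 30 fps, so it lands on only one or two frames depending on timing. The helper below computes that range for any frame rate; the 200 fps case stands in for the kind of high-speed camera used to record micro-expressions.

```python
import math
from fractions import Fraction


def frames_in_window(duration_s, fps):
    """Return (min, max) number of frames captured during an event.

    Frames are modelled as instants spaced 1/fps apart and the event as a
    half-open window of length duration_s; depending on how the window lines
    up with the camera clock, it contains floor or ceil of duration * fps frames.
    """
    intervals = Fraction(duration_s) * fps  # exact arithmetic, no float rounding
    return math.floor(intervals), math.ceil(intervals)


if __name__ == "__main__":
    micro = Fraction(1, 25)  # briefest micro-expressions, in seconds
    for fps in (25, 30, 60, 200):
        print(fps, "fps ->", frames_in_window(micro, fps))
```

Passing durations as `Fraction` values keeps cases like 1/25 s at 25 fps exactly on one frame instead of drifting because of floating-point rounding.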

Real-World Applications

Why are companies investing heavily in this technology? The potential use cases are transformative.

Automotive Safety

Some modern cars use interior cameras to monitor drivers for fatigue or signs of "road rage," prompting the driver to take a break when the system detects drowsiness or extreme stress.
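Drowsiness detection does not always need a full emotion classifier. One published landmark-based heuristic is the eye aspect ratio (EAR), which compares the eye's height to its width using six landmarks around the eye and drops towards zero as the eye closes. The sketch below assumes the landmarks come from some face-landmark detector; the 0.2 threshold and 15-frame limit are illustrative values, not settings from any production vehicle system.

```python
import math


def eye_aspect_ratio(eye):
    """EAR from six (x, y) landmarks ordered p1..p6 around one eye.

    p1 and p4 are the eye corners; p2/p3 sit on the upper lid and
    p6/p5 on the lower lid directly beneath them.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


class DrowsinessMonitor:
    """Flags drowsiness when EAR stays below a threshold for N consecutive frames."""

    def __init__(self, threshold=0.2, min_frames=15):
        self.threshold = threshold    # illustrative value, tune per camera/driver
        self.min_frames = min_frames  # illustrative value, depends on frame rate
        self.closed_frames = 0

    def update(self, ear):
        """Feed one frame's EAR; return True while an alert should be raised."""
        if ear < self.threshold:
            self.closed_frames += 1
        else:
            self.closed_frames = 0  # eyes reopened: reset the counter
        return self.closed_frames >= self.min_frames


if __name__ == "__main__":
    open_eye = [(0, 0), (1, 1), (2, 1), (3, 0), (2, -1), (1, -1)]
    closed_eye = [(0, 0), (1, 0.1), (2, 0.1), (3, 0), (2, -0.1), (1, -0.1)]
    monitor = DrowsinessMonitor(min_frames=3)
    for eye in (open_eye, closed_eye, closed_eye, closed_eye):
        print(round(eye_aspect_ratio(eye), 3), monitor.update(eye_aspect_ratio(eye)))
```

Requiring several consecutive low-EAR frames is what separates a long eye closure from an ordinary blink.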

Healthcare & Therapy

Apps can help children with autism learn to recognize emotional cues, or assist therapists in tracking the progress of patients with depression by monitoring changes in facial expressiveness (such as a flat, unresponsive face) over time.

Market Research

Instead of asking focus groups "Did you like this ad?", brands can measure the involuntary emotional reaction of viewers frame-by-frame.
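Measuring a reaction frame-by-frame typically means running the classifier on every frame and then summarising the resulting time series. A minimal sketch, assuming per-frame probabilities for one emotion are already available: smooth them with a short moving average to suppress single-frame flicker, then report when the reaction peaked. The window size and scores are invented for illustration.

```python
def moving_average(values, window):
    """Trailing moving average; early frames average over what is available."""
    out = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1):i + 1]
        out.append(sum(chunk) / len(chunk))
    return out


def peak_reaction(scores, fps, window=3):
    """Return (time in seconds, smoothed score) of the strongest reaction."""
    smoothed = moving_average(scores, window)
    best = max(range(len(smoothed)), key=smoothed.__getitem__)
    return best / fps, smoothed[best]


if __name__ == "__main__":
    # Made-up per-frame "happiness" probabilities for a 10 fps clip of an ad viewer.
    happiness = [0.1, 0.1, 0.2, 0.6, 0.8, 0.7, 0.3, 0.1]
    print(peak_reaction(happiness, fps=10))
```

A trailing average shifts the peak slightly later (here frame 5 rather than the raw maximum at frame 4); a centred window avoids that lag but needs future frames, which is fine for recorded footage.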

The Ethical Frontier

The ability to detect emotions raises serious privacy concerns. Should a billboard be allowed to analyze your mood to serve you a specific ad? Should your boss be notified if you look "bored" during a Zoom meeting?

Furthermore, bias is a major issue. If a model is trained primarily on one demographic, it may consistently misinterpret the expressions of people from other ethnicities or cultures. As we deploy these systems, transparency and consent must be paramount.
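A basic first check for this kind of bias is to report accuracy separately for each demographic group in a labeled test set, rather than a single overall number. The sketch assumes each test record carries a group tag alongside the true and predicted labels; the group names and records are hypothetical.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, true_label, predicted_label) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, pred in records:
        total[group] += 1
        correct[group] += int(truth == pred)
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(per_group):
    """Difference between the best- and worst-served groups."""
    return max(per_group.values()) - min(per_group.values())


if __name__ == "__main__":
    # Hypothetical evaluation records: (group, true label, predicted label).
    records = [
        ("group_a", "happy", "happy"), ("group_a", "sad", "sad"),
        ("group_a", "angry", "angry"), ("group_a", "fear", "surprise"),
        ("group_b", "happy", "happy"), ("group_b", "sad", "neutral"),
        ("group_b", "angry", "angry"), ("group_b", "fear", "sad"),
    ]
    per_group = accuracy_by_group(records)
    print(per_group, accuracy_gap(per_group))
```

With small groups, differences like this can also be noise, so the per-group counts are worth reporting alongside the accuracies.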

"We are moving from an era of 'Information Technology' to an era of 'Emotion Technology'. The devices of the future will not just be smart; they will be empathetic."

Deep learning has shown that it can recognize facial expressions of emotion from images, often with startling accuracy on benchmark datasets. The question for the next decade isn't just about improving that accuracy; it's about deciding how we want this new layer of digital empathy to shape our lives.
